By the time a sponsor reaches a payer or HTA submission for a treatment, the decision that shapes the outcome was made months — sometimes years — earlier, when the evidence source was selected.

The evidence source is the data infrastructure the study is built on: a claims database, a tokenized patient network, a site-based drug registry, or a patient-mediated record retrieval platform. Most HEOR teams treat that decision as a research operations question: which source is fastest to access, which vendor can stand up a study quickly, or which database covers the most patients. But source selection should be driven by the submission question: what payers and HTA bodies will actually ask of this evidence. The source determines what the evidence can answer. And once the source is locked, there is no recovering the data it was never designed to capture.

The dominant assumption is that source selection forces a choice: speed or depth, reach or rigor, operational simplicity or population representativeness. That assumption is wrong, and it's costing sponsors at submission.

Start with the submission question

Payers and HTA bodies pose questions like:

- How long are patients staying on this therapy, and why are they discontinuing?

- What does the safety profile look like across the full treated population, including patients managed in community settings?

- Is the effectiveness signal durable beyond the trial window?

- Does the comparator picture reflect how care is actually delivered?

These questions share a common structure. They are longitudinal. They are population-representative. They require endpoint fidelity: exposure timing, dosing changes, discontinuation reasons, adverse events captured with sufficient nuance to defend a claim, and provenance that traces from observed outcome back to source record. A source that cannot meet that structure cannot answer the question.

Three assumptions that don't survive submission

"More data means better evidence." Scale is what most large aggregators lead with — 100 million patients, 1.2 billion records, 4,000 datasets. Yet a claims database covering 80% of the U.S. insured population still cannot tell you why a patient stopped treatment, whether the dose was titrated, or what the patient's symptom burden actually was. Scale and depth are different problems, and payers care about depth.

"High match rates mean cohort preservation." Tokenization vendors routinely report match rates of 85–95%. But the usable analytic cohort (patients with adequate coverage, the right time windows, and measurable endpoints) frequently drops to a fraction of the matched population. The dropout is not random: patients who change insurance, see community providers, or receive care outside contributing networks are systematically underrepresented. A 90% match rate that yields a 40% usable cohort is not cohort preservation. It is a bias generator.
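
The arithmetic behind that gap can be sketched as an attrition funnel. This is a minimal illustration, not a description of any vendor's pipeline: the starting cohort of 1,000 patients and the retention fraction at each step after matching are hypothetical values, chosen so that a 90% match rate ends near a 40% usable cohort.

```python
def attrition_funnel(enrolled, steps):
    """Apply each retention fraction in order.

    Returns a list of (label, patients_remaining, share_of_enrolled).
    """
    rows = []
    remaining = enrolled
    for label, retained_fraction in steps:
        remaining = round(remaining * retained_fraction)
        rows.append((label, remaining, remaining / enrolled))
    return rows


# Hypothetical retention fractions; only the 90% match rate comes from the text.
steps = [
    ("token match", 0.90),
    ("adequate coverage", 0.70),
    ("required time window", 0.80),
    ("measurable endpoint", 0.80),
]

funnel = attrition_funnel(1000, steps)
for label, remaining, share in funnel:
    print(f"{label:<22} {remaining:>5} patients  {share:.0%} of enrolled")
```

With these assumed fractions, 1,000 patients become 900 after matching and 403 after the remaining filters. A funnel like this shows how many patients remain, not who was lost, so the non-random dropout still has to be checked by comparing retained and excluded patients.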

"Site rigor produces submission-ready evidence." Academic medical centers produce data that is internally rigorous: adjudicated, structured, protocol-controlled. However, that rigor is bounded by who walks into those sites. For most therapies, a drug registry built from academic and specialty sites systematically misses patients managed in community settings, where much of the treatment story is being written.

Most sponsors think they're choosing between two known options: claims for breadth, site-based registries for depth. The problem is that neither delivers what submission audiences actually require, and treating the choice as binary means the real question never gets asked: can the source answer the submission question at all?

Why the conventional options all fall short

The sources that answer the submission question and hold up under review tend to meet four criteria at once: they follow patients across every provider, maintain a continuous longitudinal record, capture exposure with sufficient fidelity to defend safety and effectiveness claims, and surface what patients actually experience on treatment.

In practice, every HEOR team has to navigate the cost of meeting these criteria. Three trade-offs recur:

Speed vs. endpoint fidelity. A claims pull stands up in weeks but lacks the clinical depth to capture the endpoints submission audiences care about. A site-based registry capable of capturing that depth takes months to stand up. The cost of speed is paid at submission, when the audience asks a question that depends on a field the source could not record.

Reach vs. depth. A 100-million-patient aggregator is wider than any prospective study and shallower than what payers and HTA bodies now require. Reach without depth produces evidence that is defensible in volume and indefensible on the question.

Operational overhead vs. population reach. Site-based drug registries require contracting with, training, and maintaining every participating center. They're slow to stand up, expensive to operate, and incur additional cost whenever the protocol changes. And after that investment, the cohort is still bounded by who walks into those sites.

Off-the-shelf solutions encourage the assumption that a single package resolves these trade-offs: claims aggregators imply scale plus endpoint fidelity; site registries imply rigor plus representativeness. Neither package holds.

Designing for the question

Designing against the four criteria rules out most off-the-shelf options. The infrastructure that passes is the one that resolves those trade-offs. It is patient-mediated, longitudinal, and anchored to people rather than tokens.

That is the foundation of PicnicResearch's evidence platform, built to support drug registries, post-market studies, and long-term follow-up programs that hold up at submission.

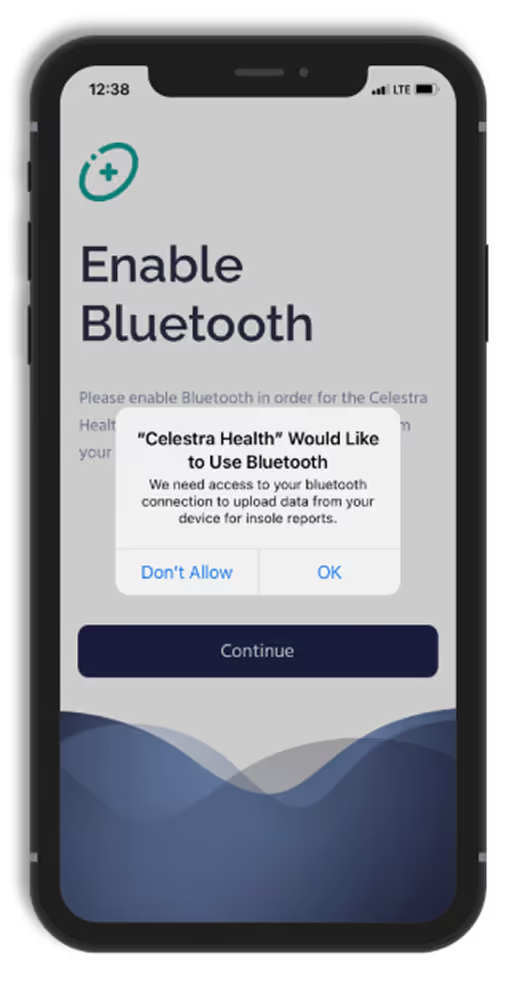

The speed-versus-depth problem: we use enrollment channels like specialty pharmacies to reach and enroll patients rapidly, at the moment of therapy initiation. There is no site contracting, no training burden, and no operational overhead that compounds with each protocol amendment. Stand-up is fast, and clinical depth is not sacrificed to get there.

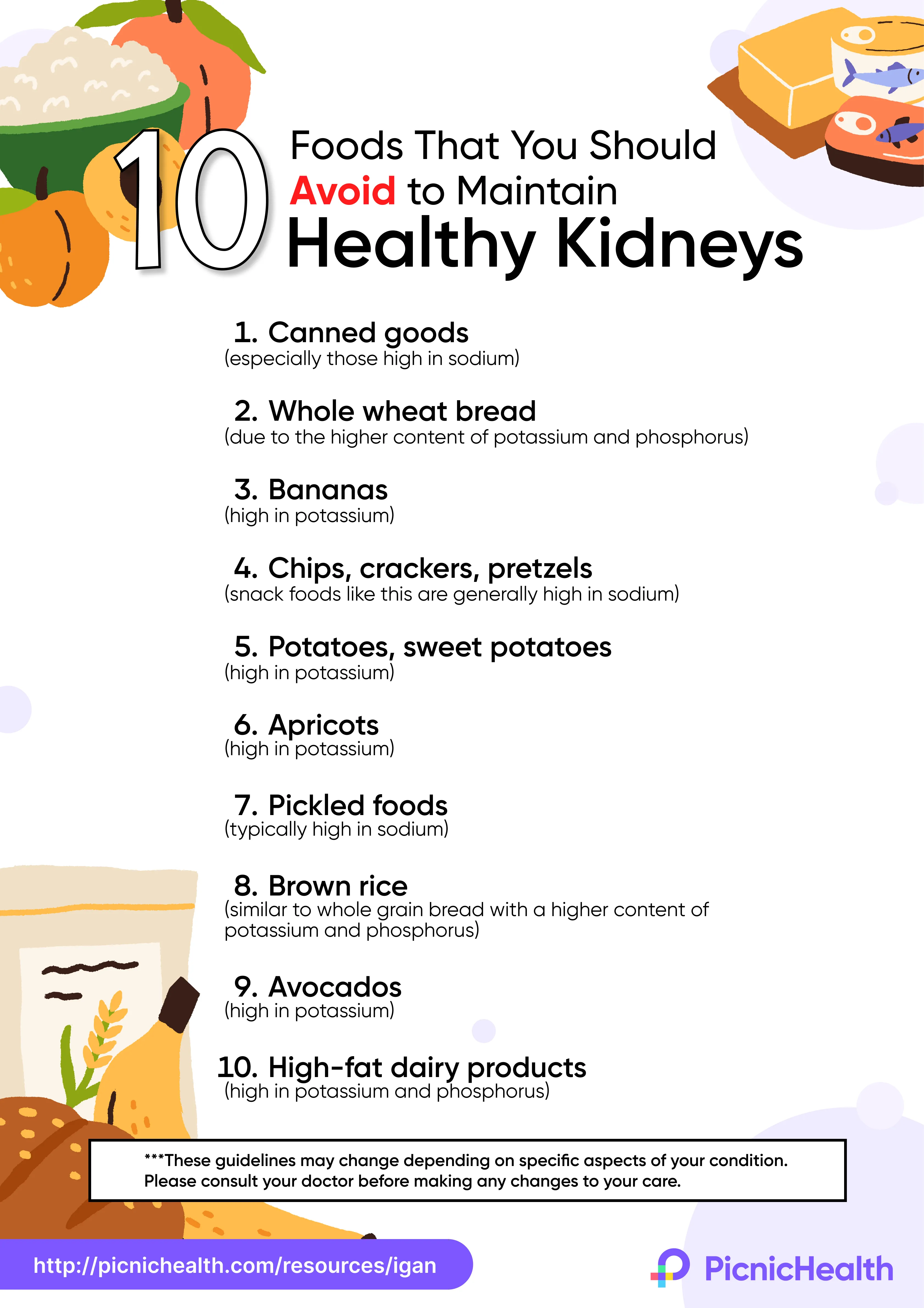

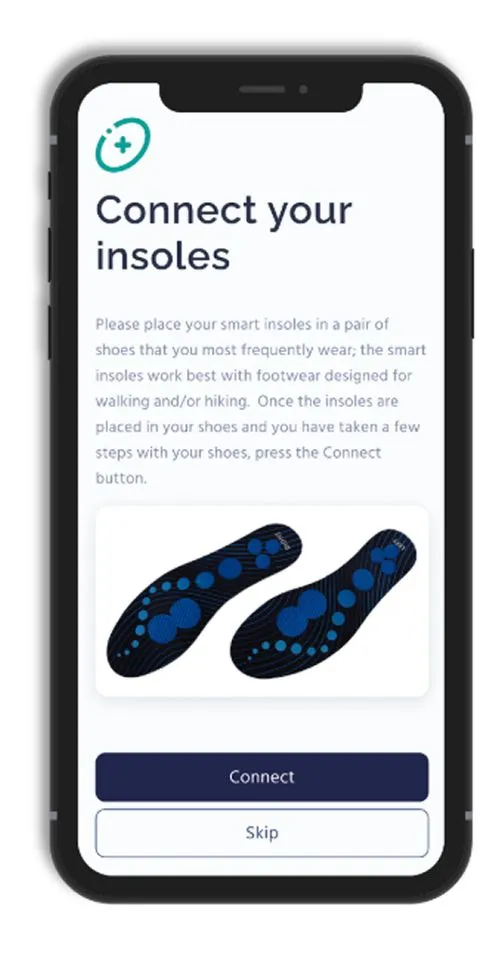

The reach-versus-depth problem: we use patient right of access to retrieve complete medical records across every provider a patient sees, including primary care, specialty, community, and hospital. The longitudinal history is assembled around the person, not reconstructed from tokens or bounded by network participation. A 100-million-patient aggregator is wider, but it cannot match this depth.

The population-reach problem: enrollment is not bounded by who walks into an academic center. Patients are reached through the treatment journey itself, which means the cohort reflects how the therapy actually performs across the full treated population, including the patients claims data misses and site networks never see.

The result is an ambispective, decision-grade dataset: multi-year retrospective retrieval surfaces baseline characteristics, prior lines, comorbidities, and the exposure detail required to confirm and defend a claim. Ongoing record refresh and direct patient engagement capture late-emerging safety signals, durability of response, and patient-reported outcomes across years of follow-up.

Cohorts remain intact across years of follow-up. Endpoints trace to source records, not surrogate measures or reconstructed claims. The four criteria stop being aspirational and become the actual architecture of the evidence.
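
One way to make the four criteria operational is to record them as an explicit checklist and screen each candidate source against it before the source is locked. The sketch below is illustrative only: the `SourceProfile` type, its field names, and the example ratings are hypothetical and do not describe any real product or dataset.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SourceProfile:
    """Hypothetical checklist: one flag per criterion named in the article."""
    name: str
    follows_all_providers: bool
    continuous_longitudinal_record: bool
    exposure_fidelity: bool
    patient_experience: bool


def unmet_criteria(profile):
    """Return the names of the criteria the source does not meet."""
    return [
        f.name
        for f in fields(profile)
        if f.name != "name" and not getattr(profile, f.name)
    ]


# Example ratings are assumptions made for illustration.
candidate = SourceProfile(
    name="candidate source A",
    follows_all_providers=True,
    continuous_longitudinal_record=True,
    exposure_fidelity=False,
    patient_experience=False,
)

gaps = unmet_criteria(candidate)
print(f"{candidate.name}: unmet criteria -> {gaps or 'none'}")
```

Writing the screen down this way forces the team to state, per criterion, what the source can and cannot record while the choice is still open.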

Source selection is evidence strategy

Source selection is not an operations decision. It is the first evidence strategy decision a HEOR team makes, and it determines the shape of everything that follows.

The teams whose evidence holds up don't accept the trade-offs as fixed. They choose designs capable of answering the question.

Explore how PicnicResearch can help your evidence hold up at submission. Check out our webpage or contact us.